Fastest & Cheapest

CI Runners

Run GitHub Actions and GitLab CI pipelines smarter. Stop wasting money on idle runners and jobs — our Spike Instances scale to fit your workload in real time.

As your business grows,

slow CI and rigid billing inflate costs

Rigid Billing

You’re charged for time, not usage: costs become unpredictable as you scale.

Slow CI

Heavy workloads clog up CI pipelines, stalling builds and slowing teams down.

Wasted Cloud Spend

You’re paying for idle resources that your pipelines don’t actually use.

No Clarity

It’s hard to optimize when you can’t see where your CI resources are going.

Finally, a Compute Platform

That Solves the Real Problems

High-performance by default

Get rocket-fast builds with generous CPU and memory limits — no tuning needed.

Fair, load-based billing

Every second, we track each job’s CPU and memory load. You pay only for load; idle CPU is free.
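To make the difference concrete, here is a rough sketch of how load-based billing could compare with time-based billing. The rates, the `Sample` type, and both function names are hypothetical and chosen only for illustration; they are not actual pricing or a real API. The sketch also assumes memory is billed on what is in use each second.

```python
from dataclasses import dataclass

# Hypothetical rates for illustration only; not actual pricing.
CPU_RATE_PER_CORE_SECOND = 0.00001
MEM_RATE_PER_GIB_SECOND = 0.000002


@dataclass
class Sample:
    """One per-second measurement of a job's load (hypothetical shape)."""
    cpu_cores_used: float  # busy cores during this second, 0.0 when idle
    mem_gib_used: float    # GiB of memory in use during this second


def load_based_cost(samples):
    """Bill only the load that was measured in each one-second sample."""
    cpu_core_seconds = sum(s.cpu_cores_used for s in samples)
    mem_gib_seconds = sum(s.mem_gib_used for s in samples)
    return (cpu_core_seconds * CPU_RATE_PER_CORE_SECOND
            + mem_gib_seconds * MEM_RATE_PER_GIB_SECOND)


def time_based_cost(samples, provisioned_cores, provisioned_gib):
    """Bill the full provisioned size for every second the job runs."""
    seconds = len(samples)
    per_second = (provisioned_cores * CPU_RATE_PER_CORE_SECOND
                  + provisioned_gib * MEM_RATE_PER_GIB_SECOND)
    return seconds * per_second


# A job that waits on the network for a minute, then compiles for a minute.
job = [Sample(0.0, 1.0)] * 60 + [Sample(4.0, 2.0)] * 60
print(f"load-based: {load_based_cost(job):.6f}")
print(f"time-based: {time_based_cost(job, 4, 8):.6f}")
```

In this sketch the idle minute adds nothing to the CPU part of the bill, while the time-based model charges for all provisioned cores during both minutes.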

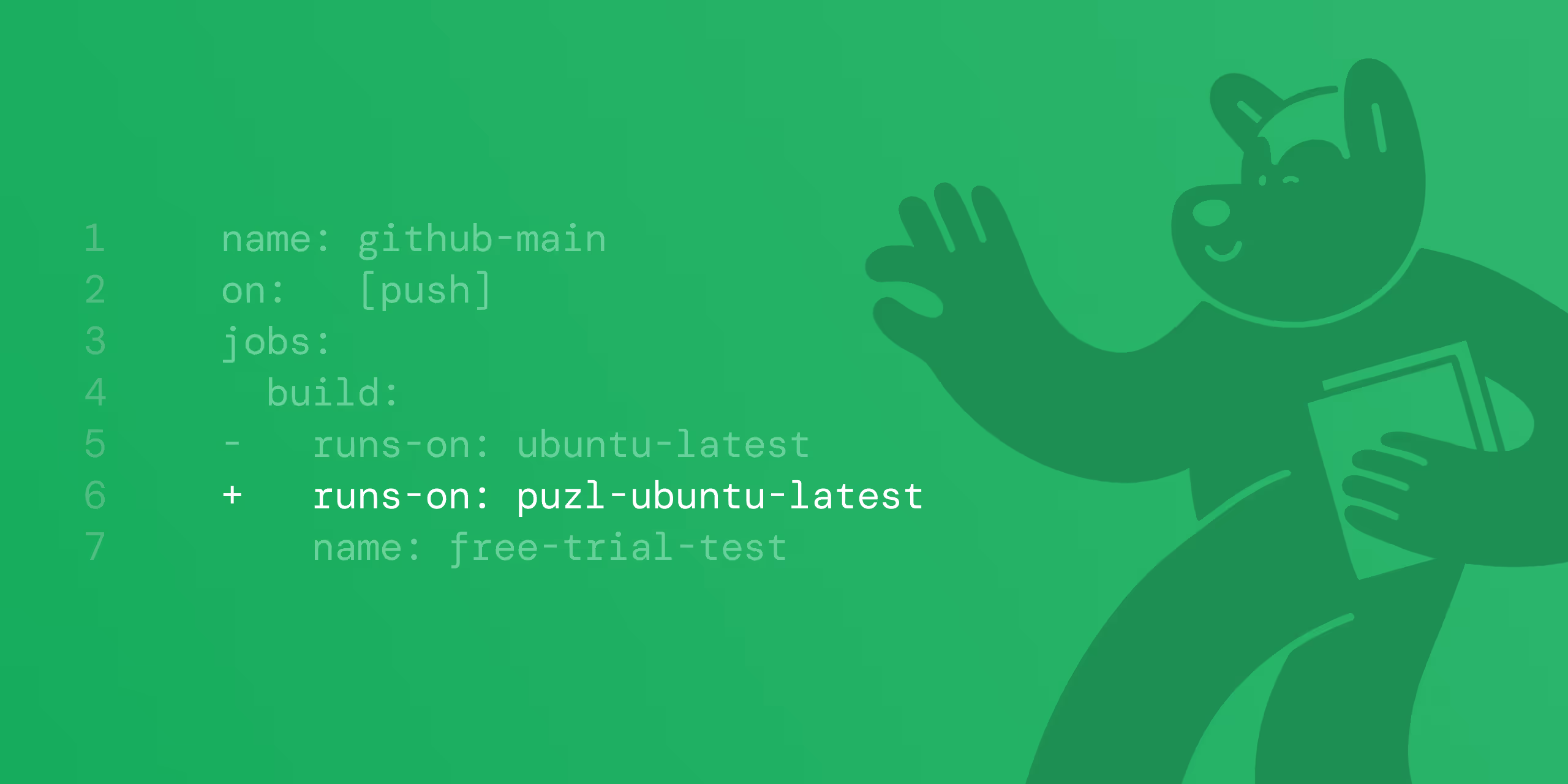

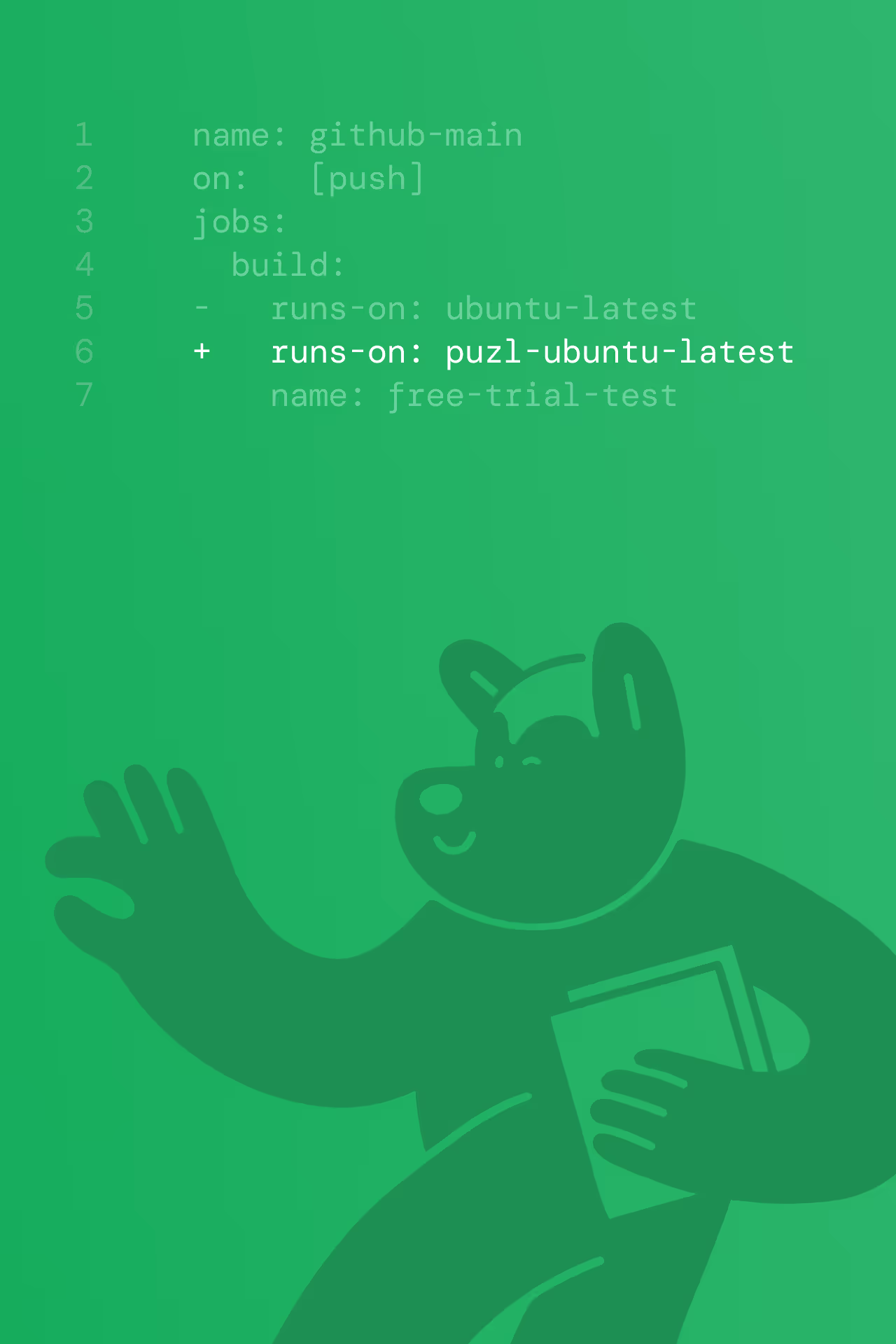

Seamless integration

Plug into your existing setup in minutes — no vendor lock-in, no headaches.

Security and speed

are not a trade-off

Spike Instances: Mighty MicroVMs

Every job spins up a clean KVM-based instance that delivers near bare-metal performance and strong tenant isolation.

Ephemeral filesystem

Every job mounts a dedicated filesystem: high-performance, on-demand storage that vanishes on completion, leaving no data behind.
